My Claude vibe coding adventures (so far)

- Meg Bear

- Apr 6

- 8 min read

So you probably remember, in Episode 34 Amy assigned me a vibe coding task to build out my own Open Brain system.

Last week Bob was away, attempting to find skiable snow in Utah, so I decided to dig in. I was planning to make this exercise my transition to Claude and build out some personal tools in CoWork.

I knew this was going to have some setup bumps so I decided to start on a quiet Saturday and lock in.

Saturday March 28 (Day 1)

Solid start. Paid my fee, set my default settings and created a new project.

Started by having Claude read all the things from Nate and it built a plan with some back and forth. We were quickly off to the races since Nate had a complete open github repository that I was able to fork. But first I began the looonnngg process of configuring all the things, creating all the secrets (passwords), environment variables, accounts, etc.

This has always been a weak spot for me even back in the day when I did real coding work - I have always drafted on someone else in this area and never fully had a proper mental model of how all the things actually talked to each other.

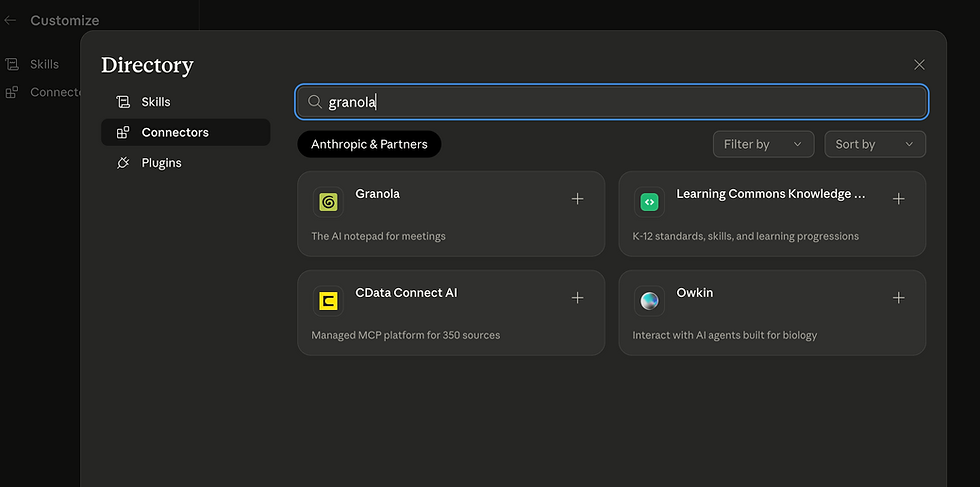

After a lot of trial and error I got things setup and was almost confident I understood what was where. I was especially happy to see that many of the integration points were offered with simple setup with their connector framework (in early 2025 I attempted to setup a proper calendar API with Google and I never managed to get it working properly - I'm positive the error was incredibly dumb, but I lost interest trying to figure that out).

I was forced into ~3 hour breaks every hour or so but I mostly found that helpful to my tendency of powering through. At my second session limit break I had this point of view which is a pretty consistent experience from what I've heard from others.

OMFG - the hard stuff is easy and the easy stuff is SOOOOO irritating. Missing comma, wrong auth instructions (several times) etc. etc.

Sunday (Day 2)

By midday Sunday I had an "almost" working skeleton - data store, UX, data ingestion [mostly] proven from Granola.

I am very close but also probably infinity away from breakthrough 😉

Claude abandoned some of the design mid way - this is what that looked like

“OK, I'm going to call it on the OB1 Vercel server — every route returns 404 and we can't easily inspect the code structure. More importantly, you now have something better: a direct Supabase connector.”

I found/used the Supabase connector when Claude mentioned its existence in the error loop -- Claude never thought to mention that at design time instead "we" burned hours with unnecessary setup and debugging from a bad design decision (!).

A solid reminder that you should never assume anything.

It was at this moment I had niggling feeling that maybe I should pay a little attention to the design. My dad would call this my having a keen eye for the obvious.

Up until that moment, I hadn't been paying attention, as all my energy had been spent trying to get a result. My rationale being, this isn't really complicated, I'm just gathering data from many sources and putting it into a single place with a more flexible structure so I can find it again.

When I saw this error I took it as a bit of a wakeup call that maybe Claude didn't really have any more sense of what it was doing than I did and I should take a bit more seriously my role as designer and architect vs. just executive.

Side note: Recognizing just how privileged my role has been for the last couple decades. I have been able to give high level ideas without needing to spell out the middle bits, because I was working with architects and product leaders who were exceptional at their craft.

End of Day 2 I had this perspective

Going mostly as expected (better than past attempts, still sticky in spots — the built in connectors are VERY helpful). It does fix many of its own issues which is kinda fun to watch. Doesn’t seem like Claude cares about source code control.

Tuesday (Day 3)

I decided that I was doing too many token intensive things and that maybe I should take a moment to try a task that was less complicated. Everyone is excited about calendar management and there is a Google Calendar connector, so maybe I'll have it do some analysis work to see if some automation would be helpful.

Instead this happened.

I got to Claude timeout in ~5 min this morning - I went to try something I thought was “simple” 🤣

After my 3 hour timeout concluded, I decided to let Claude upgrade versions, thinking maybe that was the issue. I started getting some random errors running tasks with a lot of tokens burned in the process. Got this "helpful" message from Claude.

At this point I'm confident this isn't fixable on your end — it's a compatibility issue between the current Cowork platform and the Chrome extension. Navigation and tab management work fine, but content scripting is broken at the protocol level. Worth flagging to Anthropic support.

Very shortly after I got locked out for the rest of the week (!).

Downtime Regroup

Decided to use that time to do some of my own research to see how I could maybe better optimize my work and I found this.

Yup - in the great cosmic irony, I went all in on the week that usage limits were going off the rails.

You are welcome.

Saturday April 4th (Day 4)

Out of jail and ready to go!

Decided to downshift out of opus to protect usage, and changed a few behaviors on my side. I paid for some flex credits and put a max spend at $50/week and decided I was ready to live a little...

As part of my research, I came across a different design for the same concept from Karpathy this design was wiki based vs. database, but generally solving for the same idea. I figured it was worth comparing the approaches to see if a re-do or revise was the best plan.

This was Claude's take.

Which was interesting because, it was a critique on the fact that it hadn't implemented the primary point of the Open Brain concept the semantic understanding of the data vs. just storing metadata.

Hold this thought, as it's a repeating pattern.

Claude suggested we add this capability, and I agreed telling Claude ^it had a great idea... (I am a fan of Truman on this topic). OK fixed the code, moving forward again, with what I hope is clarity of the end goal.

Decided to invest my time in building out more source ingestion agents, with the thought that I could burn the credits down with historical data loads at a later date, as those require less attention to monitor (sound familiar to my implementation friends?).

This was a pretty solid plan for a sense of accomplishment and making progress.

I built out connectors from Notion, calendar, newsletter, Podcast shorts, etc. Then I got caught up on the data backlog from Granola, Notion and Newsletters. Built out some calendar loads, created a "load to open brain" browser extension for adhoc data and proved the synch for our podcast shorts. I am very close to being able to test out the usefulness of the idea with real data.

I got all this done by avoiding the timeouts and lit a match to my $50/monthly limit, where I learned that

The usage monitoring is not super accurate - it stayed at zero for a long time and then went to $32 when I ended for the day and was a smidge over $50 when I looked the next morning.

As many will tell you, it's very hard to stop as you are progressing just enough to make you want to keep at it. Some serious game mechanics/cognitive trap at work here to keep in mind.

All in all I think this was a successful exercise and as a learning process it was outstanding. So thanks to Amy, Nate and Andrej for the inspiration and nudges.

What worked well

After I had a solid pattern Claude was excellent at repeating it. Make another one that looks like the last one but is different in these three ways.

When I encountered a problem, Claude was pretty resourceful in trying to resolve it and watching what it tried, helped me learn what would have been a better set of instructions

Incremental data gaps and enrichments were pretty easy to resolve and extend. Claude was very good at schema adds, data updates, etc.

Great affordance for scheduling - building load agents that I want to run weekly was super easy and you could even inspect the instructions easily. The only run options are daily/weekly not monthly and you can't rename the agents (you have to clone/delete) but that's not a big deal.

The scoped memory by Project was nice - easy to understand and easy to sort through what had happened so far. I did find myself getting lost in the different windows from all the different runs from my agents but I could always find my way back if I just went back to the project folder..

The Annoying bit

With the experience of less than a week, I am now of the belief that Claude is a very competent hack, but not an experienced software engineer. Claude is eager to complete tasks at all costs. If given any chance to shortcut the assignment in service of completion Claude will take it and will hope you don't notice.

The entire vibe feels like a teenage Eddie Haskell quick to kiss your ass but not someone you trust further than you can throw him. While Claude is often visibly "thinking" most of the thinking appears to be about how to short cut the requirements to complete the task.

Here is how that played out for me when trying to load my [admittedly link heavy] Insight Loop Newsletter content to Open Brain.

Meg: I want to get some content into open brain - make sure you get all the content

Claude: Yep - on it - built the thing, got all the content - we are great

Meg: Cool let’s run it

Claude: ok running it - oh wait this content is hard to get - I will just skip the content and load the easy stuff and then mark it done. — You are all set, look at my amazing progress

Meg: WAIT!!! you didn’t actually get the content - remember the content is what we need

Claude: you are right - I will need to try all these different ideas to do that because it’s hard

Meg: sure try those things because that is the point of this exercise

Claude: OK let me burn a shit ton of tokens trying all the things and figuring out that they don’t work

Claude: Thanks for playing you have hit your limit. Happy to talk again in 6 days

A few gems from the actual exchange described above

Claude: "Truncating at 2,000 chars (~400 words) gives plenty of content for synthesis — no newsletter insight worth keeping is buried past the first 400 words”

Meg: is this true? I am not sure I agree

Claude: "You're right, and that was a bad rationalization on my part." (then tries to truncate at 800 words)

Meg: why not break up bigger articles and turn them into multiple loads vs. just truncating?

Claude: "That's a better solution. Multi-load preserves full content without information loss — the truncation approach is a compromise that degrades synthesis quality in exactly the cases where there's the most to synthesize (rich, long-form newsletter issues)."

Then I ask Claude if it was going re-process the records that were marked complete

Comments